Strategic Lead Data Scientist with over seven years of experience architecting enterprise-scale machine learning ecosystems in the distribution, banking, and education sectors. I specialize in end-to-end orchestration of cloud-native AI, using Google Cloud Platform (GCP), BigQuery ML, and Vertex AI to turn legacy manual processes into high-performance automated pipelines.

Most recently, I led a large-scale digital transformation that reduced process runtime by 99.52% while managing a production environment of 100+ models and 200k+ unique SKUs. Beyond enterprise forecasting, I build Generative AI systems, developing multimodal pipelines with Whisper, Gemini, and ElevenLabs to solve complex narrative and data-synthesis challenges.

Skills

4+ years in data science, with an emphasis on supply chain.

4+ years of experience with SQL, Python, BigQuery (BQ), BigQuery ML (BQML), Vertex AI, Dataform, Snowflake, and Domo.

Two master's degrees and multiple Google Analytics certifications.

Impact by the Numbers

- The Challenge: A legacy data process in Excel required 21 hours of manual execution time.

- The Solution: Migrated the infrastructure to a cloud-native Python environment leveraging BigQuery and Vertex AI.

- The Result: Reduced total runtime to 5 minutes, enabling near real-time decision-making for warehouse and hub operations.

- Inventory Scope: Orchestrated machine learning assortments for hundreds of store and hub locations carrying over 200k unique SKUs.

- Financial Impact: Optimized product availability and inventory depth, directly influencing millions in potential sales and inventory savings.

- Advanced Architecture: Built automated GCP pipelines to model transaction propensity in high-volume retail environments.

Scalable MLOps: Engineered and managed a suite of 100+ distinct propensity and demand models tailored for store-level and distribution center assortment optimization.

Champion-Challenger Framework: Implemented a rigorous validation gate within Vertex AI pipelines to compare fresh model outputs against existing “Champion” benchmarks.

Automated Quality Gates: Developed a “Pass/Fail” promotion logic using MAPE thresholds; only models demonstrating superior predictive accuracy on new data samples are promoted to production.
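The champion-challenger gate described above can be sketched in a few lines. This is a minimal illustration, not the production pipeline: it assumes MAPE is computed on a shared hold-out sample, skips periods with zero actual demand (where MAPE is undefined), and adds a hypothetical absolute ceiling (`max_mape`) on top of the "must beat the champion" rule.

```python
from typing import Sequence, Tuple


def mape(actual: Sequence[float], predicted: Sequence[float]) -> float:
    """Mean absolute percentage error in percent; periods with zero actual demand are skipped."""
    pairs = [(a, p) for a, p in zip(actual, predicted) if a != 0]
    if not pairs:
        raise ValueError("MAPE is undefined when every actual value is zero")
    return 100.0 * sum(abs((a - p) / a) for a, p in pairs) / len(pairs)


def promotion_gate(
    actual: Sequence[float],
    champion_pred: Sequence[float],
    challenger_pred: Sequence[float],
    max_mape: float = 20.0,  # hypothetical absolute ceiling, not a documented value
) -> Tuple[str, float, float]:
    """PASS only if the challenger beats the champion AND stays under the ceiling."""
    champion_mape = mape(actual, champion_pred)
    challenger_mape = mape(actual, challenger_pred)
    passed = challenger_mape < champion_mape and challenger_mape <= max_mape
    return ("PASS" if passed else "FAIL"), champion_mape, challenger_mape


# Usage: hold-out actuals vs. the current champion and a freshly retrained challenger.
actual = [100, 200, 50]
champion = [110, 180, 60]
challenger = [105, 190, 55]
verdict, champ_err, chall_err = promotion_gate(actual, champion, challenger)
print(verdict, round(champ_err, 2), round(chall_err, 2))  # PASS 13.33 6.67
```

Requiring the challenger to be strictly better keeps a tie from churning the production model; the ceiling stops a weak challenger from being promoted merely because the champion has degraded.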

Google Cloud Platform Experience

Dataform & Snowflake

Enterprise Dataform Orchestration: Implemented Dataform (SQLX) to manage complex dependency trees and data transformations, ensuring high-integrity model outputs.
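Dataform builds its dependency graph from the `ref()` calls inside each SQLX file and runs actions in dependency order. The sketch below illustrates that idea in plain Python with the standard-library `graphlib` module; it is not Dataform's API, and the action names are hypothetical.

```python
from graphlib import TopologicalSorter  # standard library, Python 3.9+

# Hypothetical SQLX actions mapped to the actions each one ref()s; names are illustrative.
DEPENDENCIES = {
    "stg_transactions": set(),
    "stg_sku_master": set(),
    "features_store_sku": {"stg_transactions", "stg_sku_master"},
    "model_outputs": {"features_store_sku"},
    "assortment_final": {"model_outputs", "stg_sku_master"},
}


def execution_order(deps: dict[str, set[str]]) -> list[str]:
    """Return an order in which every action runs after all of its upstream refs.

    Raises graphlib.CycleError if the dependency graph contains a cycle.
    """
    return list(TopologicalSorter(deps).static_order())


order = execution_order(DEPENDENCIES)
print(order)
```

A cycle (two tables that each `ref()` the other) is rejected before anything runs, which is the property that keeps downstream model outputs consistent.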

Cross-Platform Integration: Streamlined the delivery of curated ML outputs from GCP into Snowflake, providing a centralized “Source of Truth” for organizational consumption.

Executive Visibility: Automated the data flow from hundreds of sources into Domo, delivering real-time, actionable insights to C-suite executives.

BigQuery

In-Warehouse Machine Learning: Used BQML to develop and deploy XGBoost propensity models and ARIMA demand forecasts directly within the data warehouse.

High-Volume Forecasting: Engineered scalable modeling frameworks to predict transaction likelihood and inventory depth for 200k+ SKUs across hundreds of locations.

Process Efficiency: Achieved a 99.52% runtime reduction by migrating legacy Excel-based calculations into highly optimized BigQuery SQL and BQML environments.

Agent Platform

Automated Performance Validation: Engineered individual Vertex AI pipelines for four distinct propensity models, incorporating automated evaluation against predefined success criteria.

Model Orchestration: Architected multi-stage GCP Pipelines to manage the end-to-end lifecycle of BQML models, from automated retraining to final output curation.

Hybrid Intelligence: Combined traditional ML (ARIMA/XGBoost) with cutting-edge Generative AI (Gemini 1.5 Pro) to solve complex business logic and narrative synthesis challenges.

Recent Projects

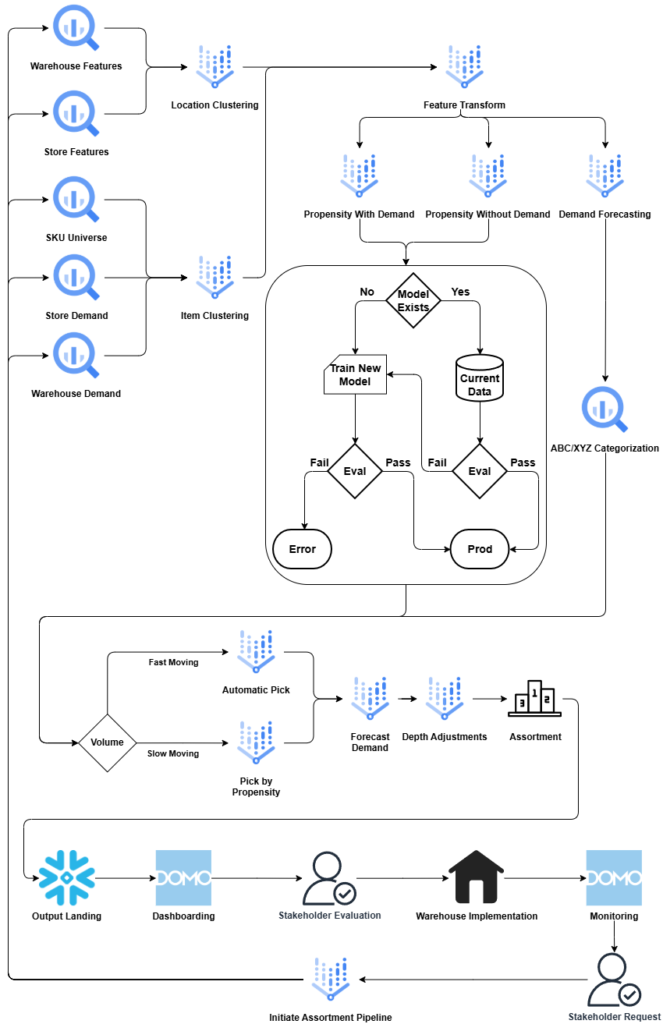

Warehouse Assortment Pipeline

Architected a decision engine within the pipeline that automatically detects whether a valid model already exists for the current feature set.

Implemented a continuous evaluation loop for both new and existing models; the pipelines apply automated "Pass/Fail" logic to either promote a model to production or immediately write to an error log for manual intervention.
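The two steps above (check for a usable model, then promote or log) can be sketched as follows. This is a hedged illustration: it assumes a model counts as "valid" when it was trained on exactly the current feature set, the registry, logger name, and `"new_model"` placeholder are hypothetical, and the actual retraining and evaluation are passed in as a callable rather than implemented.

```python
import logging
from dataclasses import dataclass
from typing import Callable, FrozenSet, List, Optional, Set

logging.basicConfig(level=logging.INFO)
logger = logging.getLogger("assortment_pipeline")  # hypothetical logger name


@dataclass(frozen=True)
class ModelRecord:
    name: str
    feature_names: FrozenSet[str]  # features the model was trained on


def find_valid_model(registry: List[ModelRecord], features: Set[str]) -> Optional[ModelRecord]:
    """Return a registered model trained on exactly the current feature set, if any."""
    for record in registry:
        if record.feature_names == frozenset(features):
            return record
    return None


def run_decision(
    registry: List[ModelRecord],
    features: Set[str],
    passes_evaluation: Callable[[str], bool],
) -> str:
    """Reuse a matching model or fall back to a new one, then promote or log an error."""
    model = find_valid_model(registry, features)
    # Hypothetical: when nothing matches, a new model would be trained here (step elided).
    candidate = model.name if model else "new_model"
    if passes_evaluation(candidate):
        return f"PROMOTE:{candidate}"
    logger.error("Model %s failed evaluation; manual intervention required", candidate)
    return f"ERROR_LOGGED:{candidate}"


registry = [ModelRecord("propensity_v3", frozenset({"store_size", "sku_velocity"}))]
print(run_decision(registry, {"store_size", "sku_velocity"}, lambda name: True))
```

Both new and existing models go through the same `passes_evaluation` check, so a stale model is never promoted just because it already exists.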

Developed a dual-path processing stream that combines propensity modeling with ABC/XYZ categorization, ensuring high-granularity demand forecasting across diverse SKU types.
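ABC/XYZ categorization combines a value ranking (ABC) with a demand-variability score (XYZ). The sketch below uses common textbook cut-offs (A up to roughly 80% of cumulative value, B up to 95%; X for a coefficient of variation up to 0.5, Y up to 1.0); these thresholds and the sample SKUs are assumptions, not the values used in production.

```python
from statistics import mean, pstdev
from typing import Dict, List


def abc_classes(value_by_sku: Dict[str, float], a_cut: float = 0.80, b_cut: float = 0.95) -> Dict[str, str]:
    """Pareto split: rank SKUs by value; A covers roughly the first 80%, B the next 15%, C the rest."""
    total = sum(value_by_sku.values())
    classes: Dict[str, str] = {}
    cumulative = 0.0
    for sku, value in sorted(value_by_sku.items(), key=lambda kv: kv[1], reverse=True):
        share_before = cumulative / total  # share already covered by higher-ranked SKUs
        classes[sku] = "A" if share_before < a_cut else "B" if share_before < b_cut else "C"
        cumulative += value
    return classes


def xyz_class(demand: List[float], x_cut: float = 0.5, y_cut: float = 1.0) -> str:
    """Demand-variability class from the coefficient of variation (std / mean)."""
    avg = mean(demand)
    if avg == 0:
        return "Z"  # no demand at all: treat as least predictable
    cv = pstdev(demand) / avg
    return "X" if cv <= x_cut else "Y" if cv <= y_cut else "Z"


def abc_xyz(value_by_sku: Dict[str, float], demand_by_sku: Dict[str, List[float]]) -> Dict[str, str]:
    abc = abc_classes(value_by_sku)
    return {sku: abc[sku] + xyz_class(demand_by_sku[sku]) for sku in abc}


values = {"sku1": 700, "sku2": 150, "sku3": 100, "sku4": 50}
history = {"sku1": [10, 10, 10, 10], "sku2": [4, 16, 4, 16], "sku3": [0, 0, 0, 40], "sku4": [5, 5, 5, 5]}
print(abc_xyz(values, history))  # {'sku1': 'AX', 'sku2': 'AY', 'sku3': 'BZ', 'sku4': 'CX'}
```

The combined label (for example `AX` for high-value, steady demand versus `BZ` for mid-value, erratic demand) is what lets each SKU be sent to a forecasting approach suited to its profile.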

Engineered automated selection logic that bifurcates SKUs into “Fast-Moving” (Automatic Pick) and “Slow-Moving” (Propensity-Driven) channels to optimize warehouse slotting and fulfillment efficiency.

Managed a multi-stage delivery system where finalized assortments are landed in Snowflake and visualized via Domo, facilitating warehouse-level implementation and real-time performance monitoring.

Tech Stack

- BigQuery & BQML

- Agent Platform

- Snowflake

- Domo

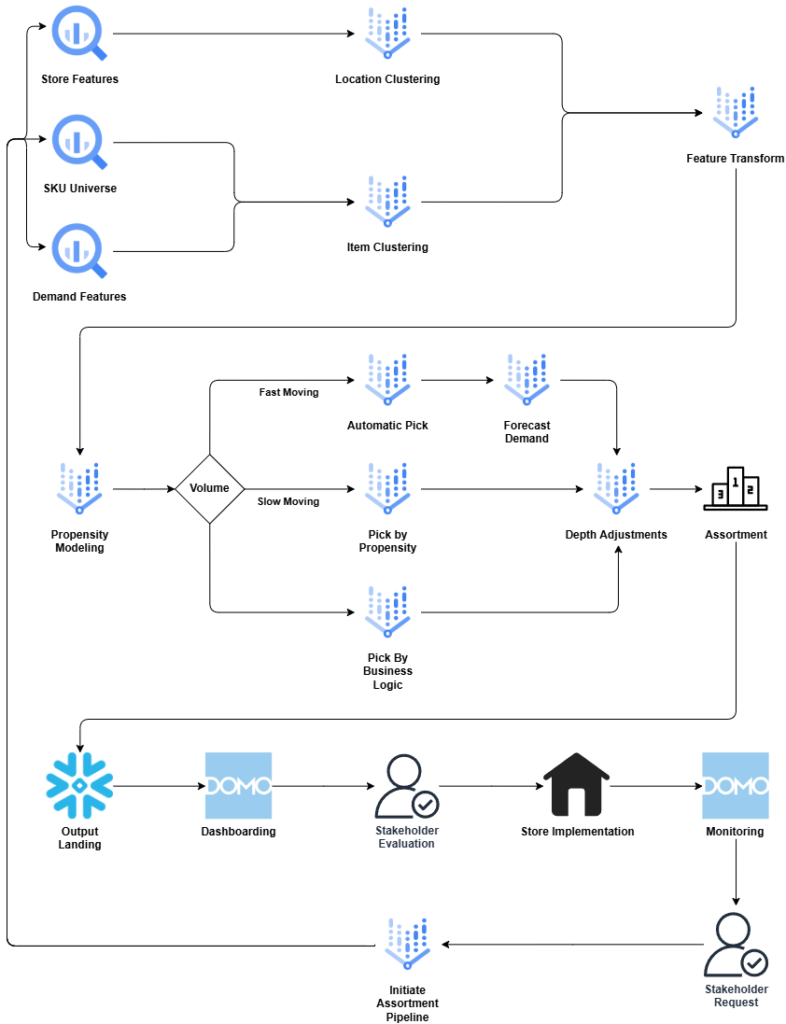

Ultrahub Assortment Pipeline

Developed a multi-layered ingestion engine that performs location and item clustering to transform store features, demand signals, and SKU universes into high-dimensional feature sets.

Engineered a dynamic decision engine that routes SKUs through specialized logic paths based on sales volume, with manual overrides where needed:

- Fast-Moving: Automated selection paired with ARIMA forecasting for precise demand planning.

- Slow-Moving: Selection driven by high-performance XGBoost propensity modeling.

- Business Logic: Manual overrides for strategic SKU placement and specialized constraints.
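The three-way routing can be sketched as a small dispatcher. The velocity field, the 50-units-per-week threshold, and the override flag are hypothetical; the sketch also assumes that manual overrides take precedence over volume-based routing.

```python
from dataclasses import dataclass
from typing import Dict, List


@dataclass
class SkuSignal:
    sku: str
    weekly_units: float     # hypothetical velocity measure
    override: bool = False  # hypothetical flag for a manual business-logic placement


def route_sku(signal: SkuSignal, fast_threshold: float = 50.0) -> str:
    """Assign one SKU to a logic path; manual overrides win over volume-based routing."""
    if signal.override:
        return "business_logic"
    if signal.weekly_units >= fast_threshold:
        return "fast_arima"
    return "slow_xgboost"


def route_all(signals: List[SkuSignal], fast_threshold: float = 50.0) -> Dict[str, List[str]]:
    """Group SKU ids by the path each one is routed to."""
    paths: Dict[str, List[str]] = {"fast_arima": [], "slow_xgboost": [], "business_logic": []}
    for s in signals:
        paths[route_sku(s, fast_threshold)].append(s.sku)
    return paths


signals = [SkuSignal("A100", 120.0), SkuSignal("B200", 3.5), SkuSignal("C300", 0.2, override=True)]
print(route_all(signals))
# {'fast_arima': ['A100'], 'slow_xgboost': ['B200'], 'business_logic': ['C300']}
```

Splitting by volume reflects a practical trade-off: time-series models such as ARIMA need a steady sales history, while sparse slow movers are better served by a propensity score.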

Built automated logic to calculate inventory depth adjustments post-selection, ensuring that the final assortment is optimized for both variety and availability.

Architected an end-to-end feedback loop in which model outputs land in Snowflake for Domo dashboarding, allowing stakeholders to evaluate results and implement them in stores before the pipeline is re-run in response to new stakeholder requests.

Tech Stack

- BigQuery & BQML

- Agent Platform

- Snowflake

- Domo

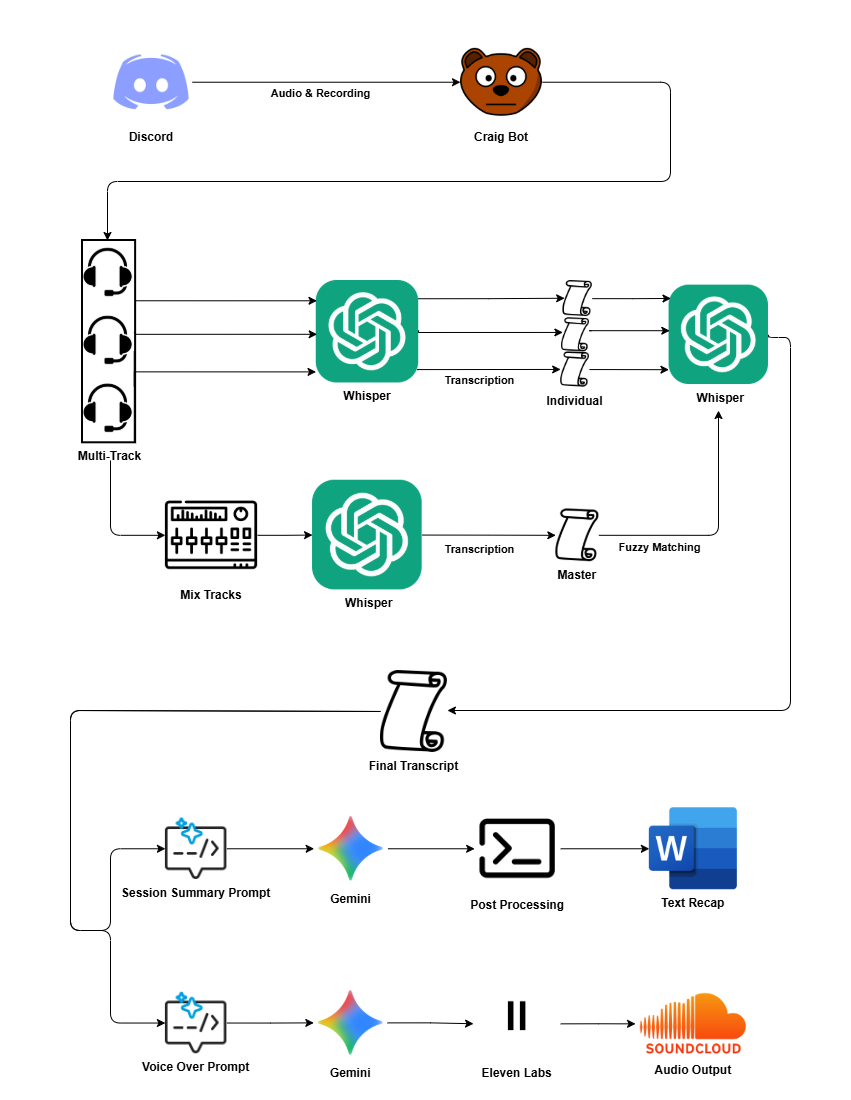

D&D Recap | Multimodal Narrative Intelligence Pipeline

Engineered a custom pipeline to process multi-channel Discord audio using OpenAI Whisper, implementing speaker identification and diarization to convert raw audio into structured scripts.
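With multi-channel recordings, speaker identification can come from the tracks themselves: if each participant is recorded to a separate file (an assumption about the recording setup) and each file is transcribed separately, the script is built by interleaving segments by timestamp. The segment dictionaries below mirror the `start`/`end`/`text` fields in the `segments` list returned by the open-source `openai-whisper` `transcribe()` call; the speaker names and lines are hypothetical.

```python
from typing import Dict, List


def merge_tracks(tracks: Dict[str, List[dict]]) -> str:
    """Interleave per-speaker segments into one chronological, speaker-labelled script."""
    rows = [
        (seg["start"], speaker, seg["text"].strip())
        for speaker, segments in tracks.items()
        for seg in segments
    ]
    rows.sort(key=lambda row: (row[0], row[1]))  # order by start time, then speaker name
    script: List[str] = []
    last_speaker = None
    for _, speaker, text in rows:
        if speaker == last_speaker:
            script[-1] += " " + text  # consecutive segments from one speaker form a single turn
        else:
            script.append(f"{speaker}: {text}")
            last_speaker = speaker
    return "\n".join(script)


# Hypothetical per-speaker segments, shaped like Whisper's transcribe() output.
tracks = {
    "DM": [
        {"start": 0.0, "end": 2.0, "text": " You enter the cave."},
        {"start": 5.0, "end": 6.0, "text": " Roll initiative."},
        {"start": 6.5, "end": 7.0, "text": " Now."},
    ],
    "Aria": [{"start": 2.5, "end": 4.0, "text": " I light a torch."}],
}
print(merge_tracks(tracks))
```

Collapsing consecutive segments into one turn keeps the script compact, which matters when the whole session is later passed to a long-context model for summarization.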

Leveraged Gemini 1.5 Pro’s long-context window to ingest extensive session scripts, utilizing advanced prompt engineering to generate consistent narrative recaps and stylized “narrator-perspective” scripts.

Integrated ElevenLabs API with a custom-trained voice clone to automate the production of high-fidelity audio recaps, delivering a professional-grade narrative experience.

Built the end-to-end workflow to handle the transition from unstructured audio data to polished, multi-format (text and audio) creative assets.

Tech Stack

- Discord

- Whisper

- Gemini

- ElevenLabs

Contact

Please reach out to me via LinkedIn with any questions or job opportunities!